Remote sensing and automatic earth monitoring are key to solving global-scale challenges such as disaster prevention, land use …

Recent metabolomics measurement devices, such as mass spectrometers, produce extremely high-dimensional data. Together with small …

Recent neural text-to-SQL models can effectively translate natural language questions to corresponding SQL queries on unseen databases. …

Negation is a core construction in natural language. Despite being very successful on many tasks, state-of-the-art pre-trained language …

Multi-Task Learning (MTL) networks have emerged as a promising method for transferring learned knowledge across different tasks. …

The COVID-19 pandemic has spread rapidly worldwide, overwhelming manual contact tracing in many countries and resulting in widespread …

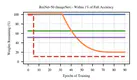

Recent work has explored the possibility of pruning neural networks at initialization. We assess proposals for doing so: SNIP (Lee et …

Action and observation delays commonly occur in many Reinforcement Learning applications, such as remote control scenarios. We study …

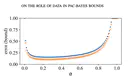

The dominant term in PAC-Bayes bounds is often the Kullback–Leibler divergence between the posterior and prior. For so-called …

We propose a stochastic variant of the classical Polyak step-size (Polyak, 1987) commonly used in the subgradient method. Although …