AI technologies are moving rapidly from research to production. With the popularity of Foundation Models (FMs) that generate text, …

Research on trust in AI is limited to several trustors (e.g., end-users) and trustees (especially AI systems), and empirical …

Multimodal AI has the potential to significantly enhance document-understanding tasks, such as processing receipts, understanding …

Data analytics is essential for extracting valuable insights from data that can assist organizations in making effective decisions. We …

Text embeddings are typically evaluated on a narrow set of tasks, limited in terms of languages, domains, and task types. To circumvent …

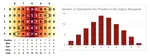

We introduce Visual Caption Restoration (VCR), a novel vision-language task that challenges models to accurately restore partially …

Scalable Vector Graphics (SVGs) are vital for modern image rendering due to their scalability and versatility. Previous SVG generation …

Abstention Ability (AA) is a critical aspect of Large Language Model (LLM) reliability, referring to an LLM’s capability to …